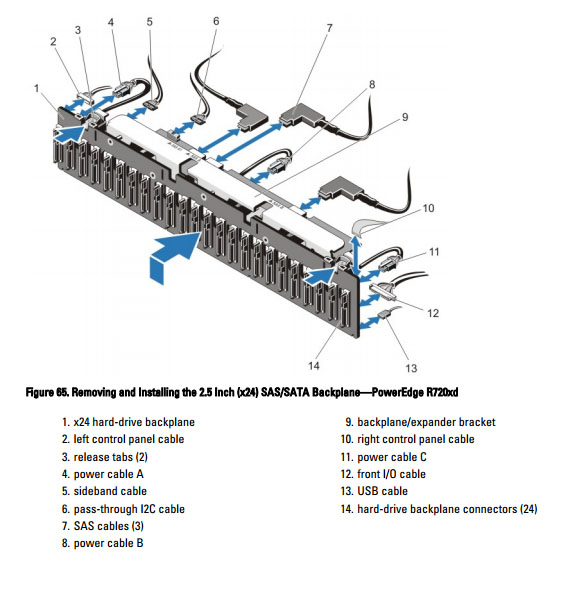

| Background

While trying to outfit my Dell R720 with solid state drives, I quickly ran into a limitation of the backplane. The backplane provides connectivity for 26 drives. 24 drives share 2 SFF-8087 connectors. A third SFF-8087 connects the backplane to a subplane in the back that holds an additional 2 drives. I am not sure if the net result is 2x13 drives or 12 drives on one connection and 14 on the second, but either way it was bad and had to go. Each SFF-8087 gives you four ports of SAS 6Gbps bandwidth. Essentially, that means the entire configuration could carry the maximum theoretical bandwidth of only 8 SSDs. In Dell's defense, I imagine their design was intended for spinning drives, and I am sure their solution is adequate for 26 platter drives.
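
The arithmetic behind that limit can be sketched in a few lines of Python. This is only a rough check using the figures quoted above. It works with line rates rather than real-world throughput, and it assumes each SSD could saturate its own 6Gbps port. The variable names are illustrative.

```python
# Back-of-the-envelope check of the backplane bottleneck.
SAS_LANE_GBPS = 6        # line rate of one SAS 6Gbps port
LANES_PER_SFF8087 = 4    # four ports per SFF-8087 connector
FRONT_CONNECTORS = 2     # connectors shared by the front drives
FRONT_DRIVES = 24        # drives sharing those connectors

# Total line rate available between the backplane and the controller.
uplink_gbps = FRONT_CONNECTORS * LANES_PER_SFF8087 * SAS_LANE_GBPS

# Number of SSDs that could run at full port speed at the same time
# (assumption: one SSD can fill one 6Gbps port).
drives_at_full_rate = uplink_gbps // SAS_LANE_GBPS

# How heavily the uplink is shared when all front drives are busy.
oversubscription = FRONT_DRIVES / drives_at_full_rate

print(f"Uplink: {uplink_gbps} Gbps")
print(f"SSDs at full rate: {drives_at_full_rate}")
print(f"Oversubscription: {oversubscription:.0f}:1")
```

With 24 drives behind 8 ports, the front bays are oversubscribed three to one, which is harmless for platter drives but not for SSDs.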

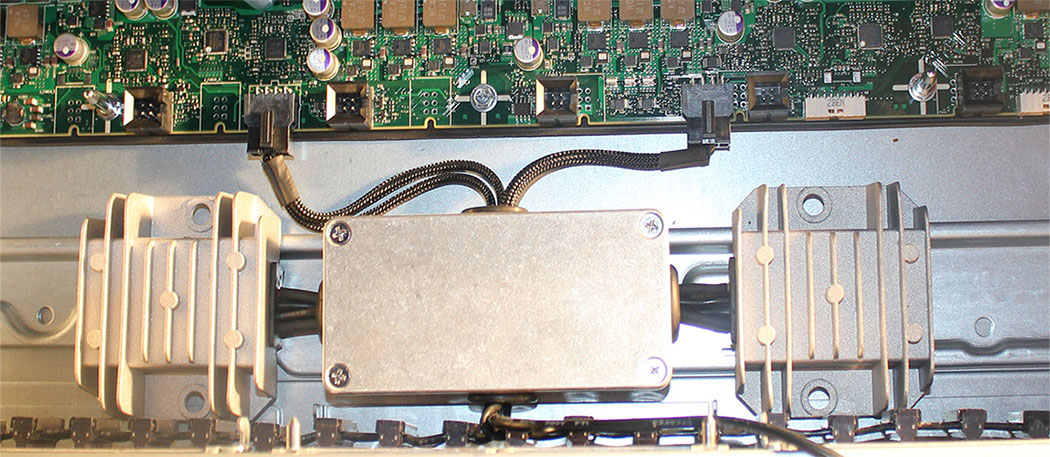

As you can see from the image above, the backplane handles more than just the drives. My first concern was determining what would still work with the backplane removed.

Modification

I removed the backplane and immediately ran into my first problem: I could not turn on the server. The power button is connection #2 in the picture. For whatever reason, Dell ran the power button to the backplane and not the motherboard. Fortunately, I have an iDRAC in the system and was able to power on the server via the web interface. The system then booted to the point where no operating system was detected. Since nothing in the boot process halted to complain about the missing backplane, I moved forward.

The next issue was power. Everything in the server was 12V. Solid state drives run on 5V, so I needed to find a good power source. The backplane itself performed the 12V to 5V conversion. Initially I was going to keep the backplane in place, move the drives forward in the caddies (there is a second mounting location on the caddy that would have netted me 0.5" of clearance), and tap power from the backplane while snaking a SATA cable past it to bypass the backplane for data. This would have required a 0.5" SATA power extension cable. I was not able to find anything that small; I believe the plastic ends of a SATA power connection are simply too big to end up with something 0.5" overall.

I did find a single 5V source on the motherboard that was intended for an optical drive. I did not feel comfortable loading a single pair of motherboard pins with 24 drives (the 2 drives in the back have their own power). SSDs do not draw much, but 24 of them definitely draw more than a laptop-style CD-ROM drive. Removing the backplane freed up 3 power connections with a handful of 12V lines. I purchased two 12V to 5V converters and fed them off 2 of those connections.
I then made 2 custom sets of power cables, each feeding 12 of the SSDs. Each string of 12 drives is tied to one converter, as pictured below.
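
For anyone sizing converters for a similar build, the load per converter can be estimated as below. This is a minimal sketch, not the calculation actually used here. The 1.0 A per-drive figure and the 90% converter efficiency are placeholder assumptions, and `converter_budget` is a hypothetical helper; substitute the numbers from your own drive and converter datasheets.

```python
def converter_budget(drives, amps_per_drive_5v, efficiency=0.90):
    """Estimate the load on one 12V-to-5V converter.

    Returns (output amps at 5V, output watts, input amps at 12V).
    `amps_per_drive_5v` and `efficiency` are assumptions to be
    replaced with datasheet values.
    """
    out_amps = drives * amps_per_drive_5v
    out_watts = out_amps * 5.0
    # The converter draws more power than it delivers.
    in_amps = out_watts / efficiency / 12.0
    return out_amps, out_watts, in_amps


# 12 SSDs per converter, as in this build; 1.0 A per drive is a placeholder.
out_amps, out_watts, in_amps = converter_budget(drives=12, amps_per_drive_5v=1.0)
print(f"5V load: {out_amps:.1f} A ({out_watts:.0f} W), 12V draw: {in_amps:.2f} A")
```

Whatever figures you plug in, the converter's rated output current should sit comfortably above the estimated 5V load.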

The connections on the board are covered up by the fan assembly. It is a tight fit, but everything fits nicely.

I used 2 Adaptec 72405 RAID cards to connect all of the SSDs. Each card provides 24 individual 6Gbps ports. Since I had 26 drives, I bought 2 cards and split the load. The back 2 drives are used as a mirror for the OS.

I had the cables for the 72405s made to length. Originally all SAS cables ran down the right side of the chassis. Going from 2 cables to 6 made that an extremely tight fit. With the backplane removed, the left side cable path was basically empty, so I ended up splitting the cables 3 on each side. The cable lengths were as follows.

For the right side, p4 is the longest lead and p1 is the shortest:

- cable 1: 29.5" total length; p4 29.5", p3 29", p2 28.5", p1 28"
- cable 2: 32.5" total length; p4 32.5", p3 32", p2 31.5", p1 30"
- cable 3: 33.5" total length; p4 33.5", p3 33", p2 32.5", p1 32"

For the left side things are opposite, so p1 is the longest lead and p4 is the shortest:

- cable 4: 34.5" total length; p1 34.5", p2 34", p3 33.5", p4 33"
- cable 5: 31.5" total length; p1 31.5", p2 31", p3 30.5", p4 30"
- cable 6: 28.5" total length; p1 28.5", p2 28", p3 27.5", p4 27"
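
If you are ordering custom cables from a spec like this, a quick sanity check before sending it off is cheap. The sketch below transcribes the cable lengths given above and confirms that the 6 cables provide 24 leads, then flags any neighbouring leads that do not differ by the usual 0.5". The names `CABLES` and `uneven_steps` are illustrative.

```python
# Lead lengths in inches, in p1..p4 order, transcribed from the cable lengths above.
CABLES = {
    1: [28.0, 28.5, 29.0, 29.5],
    2: [30.0, 31.5, 32.0, 32.5],
    3: [32.0, 32.5, 33.0, 33.5],
    4: [34.5, 34.0, 33.5, 33.0],
    5: [31.5, 31.0, 30.5, 30.0],
    6: [28.5, 28.0, 27.5, 27.0],
}
STEP = 0.5  # expected difference between neighbouring leads, in inches


def uneven_steps(leads, step=STEP):
    """Return (lead_a, lead_b, gap) for neighbouring leads whose gap is not `step`."""
    return [
        (f"p{i + 1}", f"p{i + 2}", abs(b - a))
        for i, (a, b) in enumerate(zip(leads, leads[1:]))
        if abs(abs(b - a) - step) > 1e-9
    ]


total_leads = sum(len(leads) for leads in CABLES.values())
flagged = {n: uneven_steps(leads) for n, leads in CABLES.items() if uneven_steps(leads)}

print(f"Total leads: {total_leads}")
for cable, gaps in flagged.items():
    for lead_a, lead_b, gap in gaps:
        print(f'cable {cable}: {lead_a} to {lead_b} differ by {gap}"')
```

The only entry that gets flagged is p1 on cable 2, which is 1.5" shorter than p2 rather than 0.5". Whether that was deliberate is not stated, so the figure is left as given.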

I did not take a picture of the SAS cables plugged in. As you can imagine, they clutter up that area a bit, but everything plugs in nicely.

Those who know me know I could not finish without a little bling. It is impossible to get a nice picture of this, but you get the idea.

*** Update. I have since removed the 24 Samsung SSDs and replaced them with 3 Micron P420m PCIe SSDs. I am currently capped by the 56Gbps InfiniBand link and will try 100Gbps InfiniBand once it is more commonly available.

|